A few longer pieces on AI - Issue #342

For the past few weeks, I've been dabbling with a version of OpenAI's GPT-4. My company gave me access to a screen like the old AIM instant messenger: along the left column are a few conversations, and in the main area I type in text and wait for a response from the other party. It's just like high school, except the other side of the conversation uses some automation and a large language model to create its replies.

I've been asking it for help with some of my creative challenges: writing copy for my church's website, helping me prepare outlines for longer recommendations or emails I need to send, etc. In the rare case where I have nothing to say, it can helpfully get me going. It's usually not all that interesting. I asked it why people might not want to go to church and got this reply: "Different Beliefs or Non-religious: Some individuals may not align with the theological beliefs or doctrines upheld by the church." I renamed the bot Captain Obvious.

Thus far, nothing the bot has produced has been wrong or useless. But nothing has been all that helpful. Half a minute and I could come up with the same thing. Moreover, the writing it produces is entirely average. This isn't unexpected: the model draws on an enormous range of writing for its inputs, so it lands near the middle. But making my writing more anodyne isn't useful. Good writing is sharp and clear. Any of our great prose stylists (Orwell and E. B. White come to mind) would have a field day with the idea that what we need is an easier way to generate bad writing.

That I've been nonplussed shouldn't surprise you: I'm a middle-aged dude. Being nonplussed is my specialty. The more surprising thoughts are the ones I've linked to below: people who believe this new gadget will "save the world" or end it. The middle case is far more likely, but the partisans are far more interesting to read.

Reading

AI Will Save the World

Artificial intelligence won’t end civilization, argues Marc Andreessen. Just the opposite. It is quite possibly the best thing human beings have ever created.

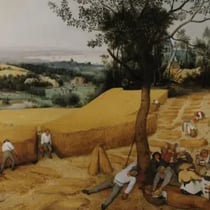

Rage Against the Machine

Technology is our new god. What would a refusal to worship look like? Paul Kingsnorth offers a vision of resistance.

Render Unto the Machine

There’s a particular type of AI-related story that I keep encountering involving the far more mundane, actual uses to which generative AI models are being put by perfectly ordinary people and institutions.
